Angela Oduor Lungati on rethinking openness in the age of AI

Author: Angela Oduor Lungati

For years, we’ve heard two phrases again and again:

Data is the new oil

Data is the new currency

But recently, while I was co-hosting the Coded Bias Nairobi Tour event with the Algorithmic Justice League and Baraza Media Lab, a different phrase stayed with me. Dr Joy Buolamwini, a Fellow of the Institute for Ethics in AI’s Accelerator Fellowship Programme, described data as something else entirely.

“Data is destiny”

That framing changes the conversation. It moves data away from being something to extract and towards something deeply human. Data doesn’t just describe our world; it increasingly determines how decisions about us are made.

So, a harder question emerges:

Who gets to shape that destiny?

For over 19 years, the work we’ve done at Ushahidi has been grounded in a simple belief: lived experiences count as expertise. Communities closest to a problem often understand it best; they’ve simply lacked the infrastructure to make that knowledge visible in decision-making spaces.

We’ve built tools to help people share what’s happening around them in real time during elections, disasters, public health crises, and climate adaptation efforts. Time and again, community-generated data has revealed patterns that decision-makers and wider institutions have missed.

But the role of data is changing. In today’s AI era, data doesn’t only inform decisions; it trains systems that make them.

Recent assessments suggest that Africa contributes less than 1% of global AI research output, despite being home to roughly 18% of the world’s population. Predictably, these gaps in research and data leave many AI systems struggling to understand African languages, contexts, and social realities.

Representation matters, and better representative datasets are part of the solution.

But that is not the whole story.

The Paradox of Inclusion

Across the continent and beyond, data is often collected in the name of innovation and development without meaningful participation or protection for the people who generate it. Local knowledge, behavioural patterns, and even biometric information are captured and used to train global AI systems, systems that communities have little stake in shaping and limited ability to access or benefit from. African language data, for example, has often been crowdsourced or scraped to build models that remain inaccessible to the very communities that made them possible.

We now face a defining paradox:

We need more representative data to make AI systems fairer. But collecting and sharing data can also deepen exploitation, inadvertently facilitating data extractivism.

Ushahidi’s Dilemma

For us at Ushahidi, this tension is deeply personal.

Since 2008, communities around the world have trusted our platforms to share their lived experiences with us, whether about violence, governance failures, environmental risks, or other issues.

As AI continues to proliferate in the ecosystems we operate in, an obvious question has emerged:

Should we open these datasets to help improve representation in AI systems?

Our instinct, shaped by years in the open source and open data movements, was, and still is, yes.

But we’ve come to recognise that the assumptions that shaped openness on the web do not fully hold in the age of AI, a realisation shared by others in the wider knowledge commons, such as Creative Commons in its recent post on how to keep the internet human. Releasing data without safeguards could expose communities to harm, allow value to be extracted without return, and remove agency from the very people whose experiences made the data meaningful.

The question is no longer simply whether data should be open, but whether openness itself must evolve.

The Next Question

Over the years, Ushahidi’s work has been guided by a series of questions:

How do we help people raise their voices to influence decision-making?

How do we transform those raised voices into meaningful insight?

How do we make sure that technology serves communities rather than institutions alone?

Now, a new question sits in front of us:

How do we share data in ways that preserve agency, protect people, and ensure value flows back to the communities who generated it?

This is not just a technical challenge. It’s a governance challenge.

What’s needed today are new licensing approaches, community governance models, and norms that define ethical and responsible re-use, especially when data is being used to train autonomous systems.

Towards a Responsible Data Commons

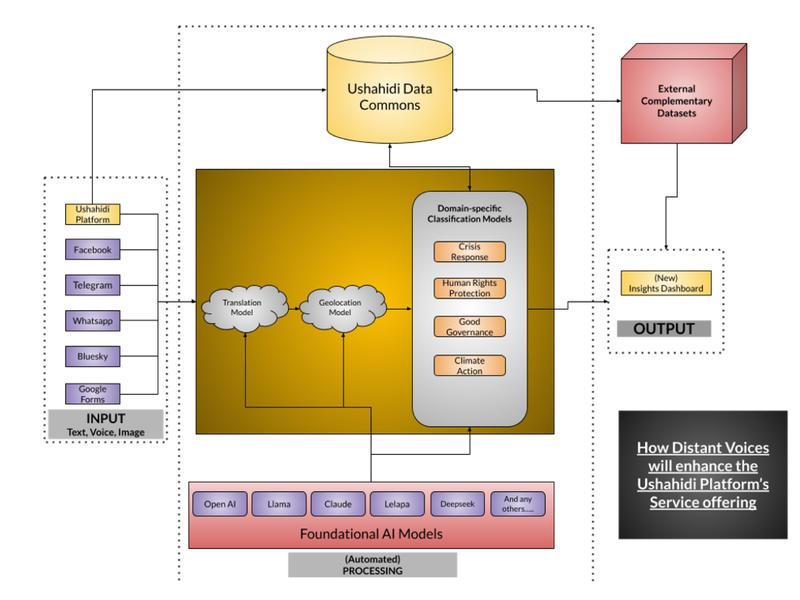

Ushahidi is embarking on an exciting journey to launch Distant Voices, an evolution of our products and services that will enable people to quickly gather data and generate insights on the issues that matter most to them.

A key component of this vision is the creation of a data commons that can both improve representation in AI systems and enable deeper insight by learning from historic collective intelligence across sectors and geographies. The challenge is doing this responsibly.

As an accelerator fellow with Oxford University’s Institute for Ethics in AI, I’ll be exploring what structures would enable Ushahidi deployers to share their datasets, and how Ushahidi can open them while preserving dignity and agency.

We’re exploring:

- Community governance models that should exist before datasets are shared

- Alternative licensing frameworks to prevent exploitative use

- Benefit-sharing mechanisms that might work in practice

- The responsibilities organisations should carry once their data trains AI systems

A New Phase for the Open Movement?

Open data and open source helped shape the modern internet. They made participation possible.

AI now forces us to ask a deeper question:

Participation on whose terms?

If data truly is destiny, then communities must have a say in how that destiny is written, not only when data is collected but also when it is reused and scaled beyond its original context.

This work is one step towards building an ecosystem where data does not merely include people, but respects them.

Because the future of open technology will not be defined only by access. It will be defined by agency.